LLM Cyberoffense Beneficiaries

The AI that finds bugs before hackers do, and why the market has it backwards

Last week, a researcher at Anthropic named Nicholas Carlini gave one of the more quietly alarming talks you’ll hear from anyone inside a frontier AI lab.

The short version: he and his team have been using Claude to autonomously find real, undiscovered security vulnerabilities in critical software. No custom tooling. No security expertise required. Just a prompt that says “find the most serious vulnerability you can,” and the model goes to work.

What it found was not trivial. A popular content management system with 50,000 GitHub stars had never had a critical bug in its history. Claude found the first one, then wrote a working exploit from scratch that extracted admin credentials with zero authentication. Carlini wrote none of that code.

More significant: the Linux kernel, one of the most battle-hardened pieces of software on earth. The model found a remotely exploitable heap buffer overflow in the NFS (Network File System) daemon, a bug that requires understanding a multi-step interaction across a network protocol handshake, the kind of thing no fuzzing tool would ever surface. It has been sitting in the kernel since 2003. It predates git.

The capability cliff is recent. Models from six months ago couldn’t reliably do this. The models released in the last three to four months can. The doubling time on AI task autonomy is running at roughly four months.
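To make the four-month doubling claim concrete, here is a minimal sketch of what it compounds to. It assumes the doubling holds as a clean exponential, which is a simplification; the function name `growth_factor` is illustrative, not something from the talk.

```python
def growth_factor(months: float, doubling_months: float = 4.0) -> float:
    """Multiple of today's capability after `months`, assuming a constant doubling time."""
    return 2.0 ** (months / doubling_months)


# Horizons in months; the 4-month doubling time is the figure quoted above.
for horizon in (4, 12, 24):
    print(f"{horizon:>2} months -> {growth_factor(horizon):.0f}x")
```

Under that assumption, twelve months is three doublings (8x) and twenty-four months is six (64x).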

Why the US government will move fast on this

The same capability that finds vulnerabilities for defenders finds them for attackers. If you can deploy an AI that autonomously scans any software in the world for exploitable flaws — without needing elite human researchers — you have just handed one actor a structural offensive cyber advantage.

The US currently sits at the frontier. China is an estimated six to nine months behind, partly constrained by export controls on the compute clusters required to train frontier models. That gap is real and closing.

The DoD doesn’t need convincing. What’s happening now — quietly — is accelerated classified procurement across AI-native security vendors and government integrators who can deploy this in cleared environments. That spending will show up in contract awards and revenue over the next twelve to twenty-four months. We are inside that window.

The market’s mistake

Here’s the irony. The narrative driving cybersecurity stocks lower has been: AI will disrupt cybersecurity companies. The fear is that AI automates security work, compresses headcount, and reduces the need for enterprise software. Qualys, Rapid7, and Tenable all dropped sharply this week on that thesis. SentinelOne hit a multi-year low in February. CrowdStrike is down roughly 25% from its November peak.

The market has the causality exactly backwards.

AI is not compressing demand for enterprise security software. It is dramatically expanding the attack surface those companies must defend. When AI can surface hundreds of potential kernel-level vulnerabilities faster than any human team can validate and patch them (which is literally what Carlini described, noting he has “several hundred crashes I haven’t had time to validate yet”), organizations need more and better security tooling, not less of it.

We screened earnings call transcripts from every major cybersecurity name over the last two quarters, filtering specifically for executives who spoke about this problem in concrete terms rather than generic “AI enhances our platform” marketing language. The findings were clear, and they point to specific names.

The winners — and why

CrowdStrike (CRWD)

CrowdStrike’s CEO George Kurtz disclosed something on an earnings call that barely made headlines: Chinese state-sponsored actors were already using public LLMs to build what he called “AI-type malware.” Not traditional executables, but prompts that instruct a system to look around, identify what’s interesting, and generate scripts on the fly. Unique every time they hit a new system. No static signature to catch.

This is Carlini’s thesis playing out in real enterprise environments, right now, confirmed by the CEO of the largest pure-play cybersecurity company in the world.

CrowdStrike’s structural advantage here is their data moat. Their Falcon platform sits on sensors across hundreds of thousands of endpoints globally, generating labeled telemetry that no LLM provider can replicate with a generic model. Charlotte AI, their autonomous SOC analyst product, is growing over 85% annually and now runs end-to-end security workflows without human intervention. That’s the right architecture for a world where attacks arrive faster than analysts can triage them. With the stock down ~25% from its peak on growth deceleration fears, this is the clearest entry point among the large-cap names.

Palo Alto Networks (PANW)

PANW has the most comprehensive AI threat taxonomy of any vendor in the space. Their Unit 42 threat research team published data showing end-to-end cyberattacks are now 4x faster than a year ago, with nearly a quarter of breaches resulting in data exfiltration in under an hour. That’s the real-world version of Carlini’s capability cliff hitting enterprise environments.

What sets PANW apart is forward-looking product specificity. CEO Nikesh Arora named MCP server hijacking — a vector most security researchers hadn’t publicly identified yet — as an emerging attack surface. Their Prisma AIRS 2.0 platform includes autonomous AI red teaming, real-time agent defense against prompt injection, and deep model inspection. They are not reacting to the AI threat; they mapped it before it became consensus. At roughly $147 versus a consensus analyst target around $215, the discount is real despite the premium multiple.
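As a quick check on the size of that discount, the implied upside can be worked out from the two approximate figures quoted above (roughly $147 for the price, around $215 for the consensus target). This is a minimal sketch; the helper name `implied_upside` is illustrative, and the result is only as precise as those rounded inputs.

```python
def implied_upside(price: float, target: float) -> float:
    """Fractional gain needed to move from the current price to the target."""
    if price <= 0:
        raise ValueError("price must be positive")
    return (target - price) / price


# Approximate figures quoted in the text, not live market data.
panw_upside = implied_upside(147.0, 215.0)
print(f"PANW implied upside to consensus target: {panw_upside:.1%}")
```

On those inputs the gap to the consensus target works out to roughly 46%.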

SentinelOne (S): highest asymmetry

SentinelOne is the most interesting risk/reward in the basket. The stock hit a multi-year low in February, trades at roughly 3.5x forward sales — a fraction of CrowdStrike and PANW — and carries an average analyst price target implying nearly 50% upside from current levels.

The bear case is that AI disrupts endpoint security and larger platforms consolidate the market. The bull case has a very specific piece of evidence: a top frontier AI lab — almost certainly one of the organizations building the exact models Carlini was demonstrating — selected SentinelOne’s Singularity platform to protect its own mission-critical infrastructure and model development environment. The architects of the offensive threat chose SentinelOne to defend against it. That is third-party validation you cannot manufacture with marketing spend. If the labs building the attack surface are buying S1 to protect themselves, the market’s AI disruption narrative is probably wrong about this name specifically.

Zscaler (ZS)

Zscaler’s CEO didn’t speak in hypotheticals. He disclosed a real incident on an earnings call: a large AI company had its coding assistant hijacked by bad actors, who used it to autonomously execute a large-scale cyberattack across multiple organizations. This is the scenario Carlini described — AI-assisted lateral movement at scale — already happening.

What makes Zscaler uniquely positioned is their inline network vantage point. They process over 500 billion transactions daily — more than 20 times Google’s search volume — which means they see AI-assisted attack traffic in real time, at the network layer, before it reaches endpoints. Their acquisition of Red Canary layered agentic SecOps AI on top of that data fabric, enabling threat detection that the CISO of a Fortune 100 company said was “finding things every month we aren’t able to find ourselves.” That combination of inline position plus agentic response is the right architecture for catching AI-generated exploits before they land.

Qualys (QLYS)

Qualys is the most operationally specific name in the cohort when it comes to solving the exact problem Carlini described — the patch pipeline overwhelm. Their platform doesn’t just find vulnerabilities. It runs an autonomous loop: detect the vulnerability, validate whether it’s actually exploitable in your specific environment, apply the remediation, then re-verify the fix worked. All without a human in the loop. Their CEO called this out directly: “You cannot show up for the AI fight today with your Jira tickets. You have to be able to do automation and autonomous decision-making.”

That’s the answer to a world where AI surfaces vulnerabilities faster than security teams can process them. The broad sector selloff has dragged Qualys down with it, creating an entry point in a name with arguably the most complete autonomous remediation product.

Booz Allen Hamilton (BAH)
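The detect, validate, remediate, re-verify loop described above can be sketched in a few lines. This is an illustration of the general pattern only, not Qualys’s implementation or API: every name here (`Finding`, `run_remediation_loop`, the callback parameters) is hypothetical, and the fallback to a human after repeated failed fixes is an assumption added for safety.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Finding:
    asset: str
    finding_id: str              # placeholder identifier, not a real CVE
    status: str = "detected"
    history: List[str] = field(default_factory=list)


def run_remediation_loop(
    findings: List[Finding],
    is_exploitable: Callable[[Finding], bool],
    apply_fix: Callable[[Finding], None],
    is_fixed: Callable[[Finding], bool],
    max_attempts: int = 2,
) -> List[Finding]:
    """Validate each detected finding, remediate it, then re-verify the fix."""
    for f in findings:
        f.history.append("detected")
        # Validate: is this actually exploitable in this environment?
        if not is_exploitable(f):
            f.status = "not_exploitable"
            continue
        f.history.append("validated")
        for attempt in range(1, max_attempts + 1):
            apply_fix(f)
            f.history.append(f"fix attempt {attempt}")
            # Re-verify: only mark remediated once the check passes.
            if is_fixed(f):
                f.status = "remediated"
                break
        else:
            # Assumption: escalate rather than retry forever.
            f.status = "needs_human"
    return findings


# Toy usage with stub callbacks standing in for real scanners and patchers.
patched = set()
results = run_remediation_loop(
    [Finding("web-01", "EXAMPLE-0001"), Finding("db-01", "EXAMPLE-0002")],
    is_exploitable=lambda f: f.asset == "web-01",
    apply_fix=lambda f: patched.add(f.asset),
    is_fixed=lambda f: f.asset in patched,
)
for f in results:
    print(f.asset, f.status)
```

The point of the pattern is the final re-verification step: a fix counts only once an independent check confirms it, which is what lets the loop run without a ticket queue in between.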

Booz Allen is the government infrastructure play in this thesis. They are already delivering AI capabilities for classified missions at the Defense Intelligence Agency, the Army National Guard, and across DoW/DoD, and they have the cleared workforce and mission relationships to be the primary integrator of AI-assisted cyber capability in classified environments.

What distinguishes them from other defense contractors is a named product: Vellox Reverser, their AI-native malware reverse engineering platform that performs fully automated analysis in minutes versus days of traditional work. Their CEO explicitly called AI-enhanced cyberattacks “one of the primary threats of 2026” — unusually direct language for a defense contractor. As classified DoW/DoD spending on AI cyber capability accelerates, Booz Allen is the most direct conduit for that spending in the public markets.

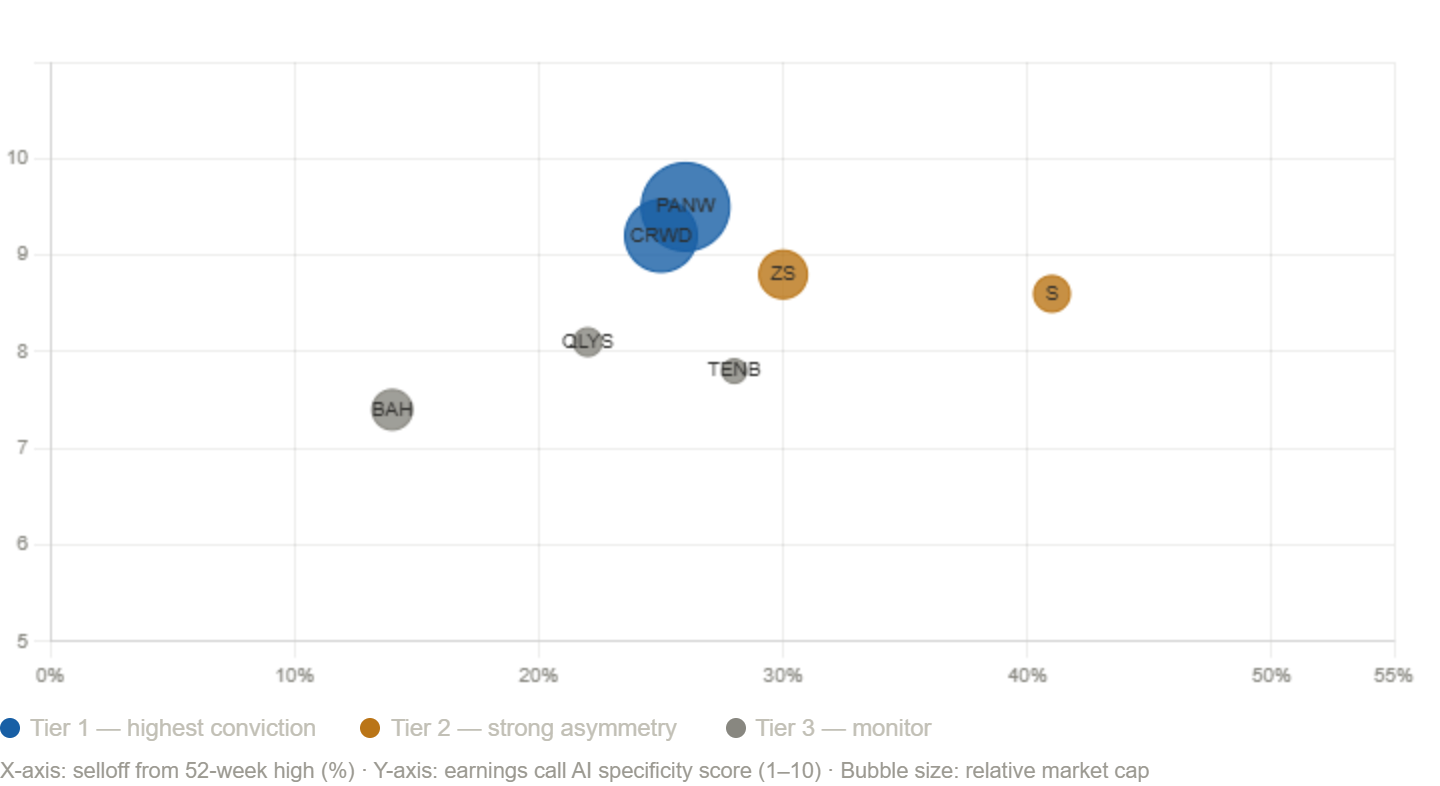

The chart — dislocated vs. how specifically management understands the threat

Below is where these names sit on valuation versus the conviction of their AI-specific evidence — the stocks with the strongest earnings call language and the most dislocated prices are in the top-right.

The bottom line

Carlini closed his talk with a practical admission: he has several hundred Linux kernel crashes he hasn’t had time to validate, which means they can’t yet be responsibly disclosed. AI found them faster than humans can validate them.

That gap — between AI-generated vulnerability discovery and human capacity to patch — is the central problem these companies exist to solve. It is about to get dramatically harder. The market is selling the companies that solve it because it thinks AI will make them irrelevant. The companies themselves, and the researcher at Anthropic who gave this talk, believe the opposite.

One side is right.

Disclaimer: This is for educational purposes only. Not financial advice.